Meta Fights Back Against Celebrity Scam Ads with Facial Recognition Technology

A New Weapon in the Fight Against Scams

Meta, the parent company of Facebook and Instagram, is cracking down on the pervasive problem of celebrity scam ads. These ads typically use celebrities’ images without permission to promote fraudulent investment schemes and cryptocurrencies. To combat them, Meta is adding facial recognition technology to its existing AI-powered ad review system.

How It Works

The system compares faces in suspected scam ads with the official profile pictures of the celebrities concerned on Facebook and Instagram. If a match is confirmed and the ad is judged to be a scam, the ad is removed automatically. Early tests have produced promising results, prompting Meta to expand the system and notify a larger group of public figures who have been targeted by these “celeb-bait” scams.

The Rise of Deepfakes

Celebrity scams have plagued Meta for years. In 2018, personal finance expert Martin Lewis took legal action against Facebook over the rampant misuse of his image in scam ads. Facebook later introduced a button for reporting scam ads and donated £3m to Citizens Advice, but the problem persisted.

The emergence of deepfake technology has further complicated matters. Deepfakes are highly realistic computer-generated images or videos that can make it appear as though celebrities are endorsing products or services they have no connection with.

Pressure to Act

Meta has faced mounting pressure to address this growing threat. Martin Lewis recently urged the UK government to give Ofcom, the UK’s communications regulator, greater powers to tackle scam ads, citing a case in which a deepfake interview with Chancellor Rachel Reeves was used to trick people into sharing their bank details.

Meta’s Commitment to Safety

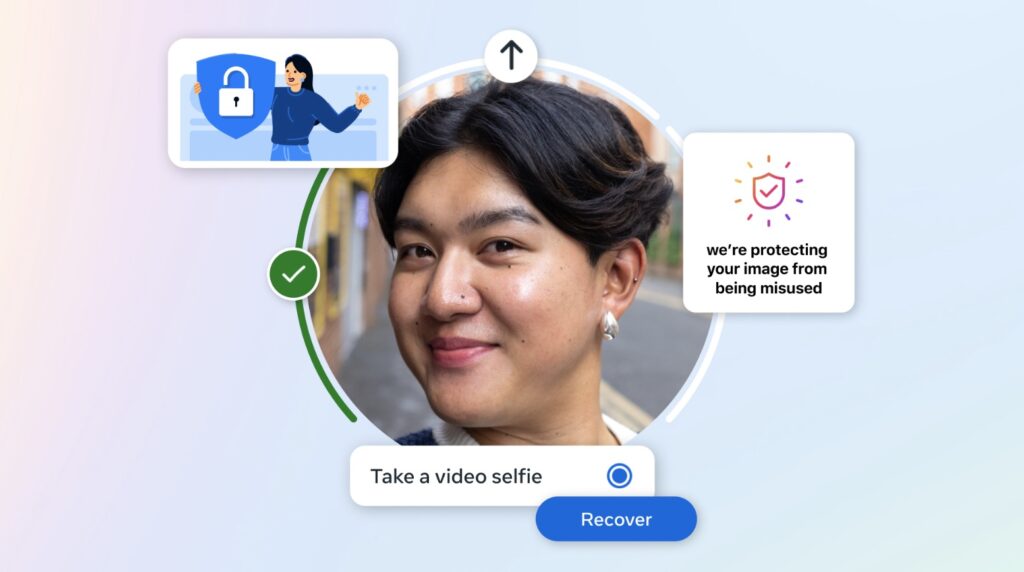

Meta acknowledges that scammers will keep trying to evade detection, and says it hopes to strengthen defenses across the industry by sharing its approach openly. Beyond scam ads, Meta is also testing facial recognition to help users regain access to locked accounts through video selfies, offering a faster alternative to traditional ID verification.

Addressing Privacy Concerns

Meta is aware of the controversy surrounding facial recognition: it used the technology previously but abandoned it in 2021 over privacy and accuracy concerns. The company says video selfies will be encrypted, stored securely, and never displayed publicly, and that facial data used in the comparison will be deleted once the check is complete. The system will also not be available immediately in regions that require regulatory approval, such as the UK and EU.